#125 – Joan Rohlfing on how to avoid catastrophic nuclear blunders

Since the Soviet Union split into different countries in 1991, the pervasive fear of catastrophe that people lived with for decades has gradually faded from memory, and nuclear warhead stockpiles have...

29 March 2022, 2h 13min

#124 – Karen Levy on fads and misaligned incentives in global development, and scaling deworming to reach hundreds of millions

If someone said a global health and development programme was sustainable, participatory, and holistic, you'd have to guess that they were saying something positive. But according to today's guest Kar...

21 March 2022, 3h 9min

#123 – Samuel Charap on why Putin invaded Ukraine, the risk of escalation, and how to prevent disaster

Russia's invasion of Ukraine is devastating the lives of Ukrainians, and so long as it continues there's a risk that the conflict could escalate to include other countries or the use of nuclear weapon...

14 March 2022, 59min

#122 – Michelle Hutchinson & Habiba Islam on balancing competing priorities and other themes from our 1-on-1 careers advising

One of 80,000 Hours' main services is our free one-on-one careers advising, which we provide to around 1,000 people a year. Today we speak to two of our advisors, who have each spoken to hundreds of p...

9 March 2022, 1h 36min

Introducing 80k After Hours

Today we're launching a new podcast called 80k After Hours. Like this show it’ll mostly still explore the best ways to do good — and some episodes will be even more laser-focused on careers than mos...

1 March 2022, 13min

#121 – Matthew Yglesias on avoiding the pundit's fallacy and how much military intervention can be used for good

If you read polls saying that the public supports a carbon tax, should you believe them? According to today's guest — journalist and blogger Matthew Yglesias — it's complicated, but probably not. Link...

16 Feb 2022, 3h 4min

#120 – Audrey Tang on what we can learn from Taiwan’s experiments with how to do democracy

In 2014 Taiwan was rocked by mass protests against a proposed trade agreement with China that was about to be agreed without the usual Parliamentary hearings. Students invaded and took over the Parlia...

2 Feb 2022, 2h 5min

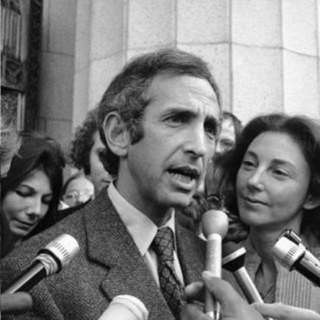

#43 Classic episode - Daniel Ellsberg on the institutional insanity that maintains nuclear doomsday machines

Rebroadcast: this episode was originally released in September 2018. In Stanley Kubrick’s iconic film Dr. Strangelove, the American president is informed that the Soviet Union has created a secret dete...

18 Jan 2022, 2h 35min