Inside the Biden admin’s AI policy approach | Jake Sullivan, Biden’s NSA | via The Cognitive Revolution

Jake Sullivan was the US National Security Advisor from 2021-2025. He joined our friends on The Cognitive Revolution podcast in August to discuss AI as a critical national security issue. We thought i...

26 Sep 2025 · 1h 5min

#223 – Neel Nanda on leading a Google DeepMind team at 26 – and advice if you want to work at an AI company (part 2)

At 26, Neel Nanda leads an AI safety team at Google DeepMind, has published dozens of influential papers, and mentored 50 junior researchers — seven of whom now work at major AI companies. His secret?...

15 Sep 2025 · 1h 46min

#222 – Can we tell if an AI is loyal by reading its mind? DeepMind's Neel Nanda (part 1)

We don’t know how AIs think or why they do what they do. Or at least, we don’t know much. That fact is only becoming more troubling as AIs grow more capable and appear on track to wield enormous cultu...

8 Sep 2025 · 3h 1min

#221 – Kyle Fish on the most bizarre findings from 5 AI welfare experiments

What happens when you lock two AI systems in a room together and tell them they can discuss anything they want? According to experiments run by Kyle Fish — Anthropic’s first AI welfare researcher — som...

28 Aug 2025 · 2h 28min

How not to lose your job to AI (article by Benjamin Todd)

About half of people are worried they’ll lose their job to AI. They’re right to be concerned: AI can now complete real-world coding tasks on GitHub, generate photorealistic video, drive a taxi more sa...

31 Jul 2025 · 51min

#220 – Ryan Greenblatt on the 4 most likely ways for AI to take over, and the case for and against AGI in <8 years

Ryan Greenblatt — lead author on the explosive paper “Alignment faking in large language models” and chief scientist at Redwood Research — thinks there’s a 25% chance that within four years, AI will b...

8 Jul 2025 · 2h 50min

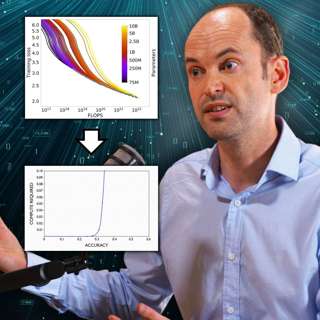

#219 – Toby Ord on graphs AI companies would prefer you didn't (fully) understand

The era of making AI smarter just by making it bigger is ending. But that doesn’t mean progress is slowing down — far from it. AI models continue to get much more powerful, just using very different m...

24 Jun 2025 · 2h 48min