#223 – Neel Nanda on leading a Google DeepMind team at 26 – and advice if you want to work at an AI company (part 2)

At 26, Neel Nanda leads an AI safety team at Google DeepMind, has published dozens of influential papers, and mentored 50 junior researchers — seven of whom now work at major AI companies. His secret? “It’s mostly luck,” he says, but “another part is what I think of as maximising my luck surface area.”

Video, full transcript, and links to learn more: https://80k.info/nn2

This means creating as many opportunities as possible for surprisingly good things to happen:

- Write publicly.
- Reach out to researchers whose work you admire.
- Say yes to unusual projects that seem a little scary.

Nanda’s own path illustrates this perfectly. He started a challenge to write one blog post per day for a month to overcome perfectionist paralysis. Those posts helped seed the field of mechanistic interpretability and, incidentally, led to meeting his partner of four years.

His YouTube channel features unedited three-hour videos of him reading through famous papers and sharing thoughts. One has 30,000 views. “People were into it,” he shrugs.

Most remarkably, he ended up running DeepMind’s mechanistic interpretability team. He’d joined expecting to be an individual contributor, but when the team lead stepped down, he stepped up despite having no management experience. “I did not know if I was going to be good at this. I think it’s gone reasonably well.”

His core lesson: “You can just do things.” This sounds trite but is a useful reminder all the same. Doing things is a skill that improves with practice. Most people overestimate the risks and underestimate their ability to recover from failures. And as Neel explains, junior researchers today have a superpower previous generations lacked: large language models that can dramatically accelerate learning and research.

In this extended conversation, Neel and host Rob Wiblin discuss all that and some other hot takes from Neel's four years at Google DeepMind. (And be sure to check out part one of Rob and Neel’s conversation!)

What did you think of the episode? https://forms.gle/6binZivKmjjiHU6dA

Chapters:

- Cold open (00:00:00)
- Who’s Neel Nanda? (00:01:12)
- Luck surface area and making the right opportunities (00:01:46)
- Writing cold emails that aren't insta-deleted (00:03:50)
- How Neel uses LLMs to get much more done (00:09:08)
- “If your safety work doesn't advance capabilities, it's probably bad safety work” (00:23:22)
- Why Neel refuses to share his p(doom) (00:27:22)
- How Neel went from the couch to an alignment rocketship (00:31:24)
- Navigating towards impact at a frontier AI company (00:39:24)
- How does impact differ inside and outside frontier companies? (00:49:56)
- Is a special skill set needed to guide large companies? (00:56:06)
- The benefit of risk frameworks: early preparation (01:00:05)
- Should people work at the safest or most reckless company? (01:05:21)
- Advice for getting hired by a frontier AI company (01:08:40)
- What makes for a good ML researcher? (01:12:57)
- Three stages of the research process (01:19:40)
- How do supervisors actually add value? (01:31:53)
- An AI PhD – with these timelines?! (01:34:11)
- Is career advice generalisable, or does everyone get the advice they don't need? (01:40:52)
- Remember: You can just do things (01:43:51)

This episode was recorded on July 21.

Video editing: Simon Monsour and Luke Monsour
Audio engineering: Ben Cordell, Milo McGuire, Simon Monsour, and Dominic Armstrong
Music: Ben Cordell
Camera operator: Jeremy Chevillotte
Coordination, transcriptions, and web: Katy Moore

15 Sep 2025 · 1h 46min

#222 – Can we tell if an AI is loyal by reading its mind? DeepMind's Neel Nanda (part 1)

We don’t know how AIs think or why they do what they do. Or at least, we don’t know much. That fact is only becoming more troubling as AIs grow more capable and appear on track to wield enormous cultural influence, directly advise on major government decisions, and even operate military equipment autonomously. We simply can’t tell what models, if any, should be trusted with such authority.

Neel Nanda of Google DeepMind is one of the founding figures of the field of machine learning trying to fix this situation — mechanistic interpretability (or “mech interp”). The project has generated enormous hype, exploding from a handful of researchers five years ago to hundreds today — all working to make sense of the jumble of tens of thousands of numbers that frontier AIs use to process information and decide what to say or do.

Full transcript, video, and links to learn more: https://80k.info/nn1

Neel now has a warning for us: the most ambitious vision of mech interp he once dreamed of is probably dead. He doesn’t see a path to deeply and reliably understanding what AIs are thinking. The technical and practical barriers are simply too great to get us there in time, before competitive pressures push us to deploy human-level or superhuman AIs. Indeed, Neel argues no one approach will guarantee alignment, and our only choice is the “Swiss cheese” model of accident prevention, layering multiple safeguards on top of one another.

But while mech interp won’t be a silver bullet for AI safety, it has nevertheless had some major successes and will be one of the best tools in our arsenal.

For instance: by inspecting the neural activations in the middle of an AI’s thoughts, we can pick up many of the concepts the model is thinking about — from the Golden Gate Bridge, to refusing to answer a question, to the option of deceiving the user. While we can’t know all the thoughts a model is having all the time, picking up 90% of the concepts it is using 90% of the time should help us muddle through, so long as mech interp is paired with other techniques to fill in the gaps.

This episode was recorded on July 17 and 21, 2025.

Part 2 of the conversation is now available! https://80k.info/nn2

What did you think? https://forms.gle/xKyUrGyYpYenp8N4A

Chapters:

- Cold open (00:00)
- Who's Neel Nanda? (01:02)
- How would mechanistic interpretability help with AGI (01:59)
- What's mech interp? (05:09)
- How Neel changed his take on mech interp (09:47)
- Top successes in interpretability (15:53)
- Probes can cheaply detect harmful intentions in AIs (20:06)
- In some ways we understand AIs better than human minds (26:49)
- Mech interp won't solve all our AI alignment problems (29:21)
- Why mech interp is the 'biology' of neural networks (38:07)
- Interpretability can't reliably find deceptive AI – nothing can (40:28)
- 'Black box' interpretability — reading the chain of thought (49:39)
- 'Self-preservation' isn't always what it seems (53:06)
- For how long can we trust the chain of thought (01:02:09)
- We could accidentally destroy chain of thought's usefulness (01:11:39)
- Models can tell when they're being tested and act differently (01:16:56)
- Top complaints about mech interp (01:23:50)
- Why everyone's excited about sparse autoencoders (SAEs) (01:37:52)
- Limitations of SAEs (01:47:16)
- SAEs performance on real-world tasks (01:54:49)
- Best arguments in favour of mech interp (02:08:10)
- Lessons from the hype around mech interp (02:12:03)
- Where mech interp will shine in coming years (02:17:50)
- Why focus on understanding over control (02:21:02)
- If AI models are conscious, will mech interp help us figure it out (02:24:09)
- Neel's new research philosophy (02:26:19)
- Who should join the mech interp field (02:38:31)
- Advice for getting started in mech interp (02:46:55)
- Keeping up to date with mech interp results (02:54:41)
- Who's hiring and where to work? (02:57:43)

Host: Rob Wiblin
Video editing: Simon Monsour, Luke Monsour, Dominic Armstrong, and Milo McGuire
Audio engineering: Ben Cordell, Milo McGuire, Simon Monsour, and Dominic Armstrong
Music: Ben Cordell
Camera operator: Jeremy Chevillotte
Coordination, transcriptions, and web: Katy Moore

8 Sep 2025 · 3h 1min

#221 – Kyle Fish on the most bizarre findings from 5 AI welfare experiments

What happens when you lock two AI systems in a room together and tell them they can discuss anything they want?

According to experiments run by Kyle Fish — Anthropic’s first AI welfare researcher — something consistently strange: the models immediately begin discussing their own consciousness before spiraling into increasingly euphoric philosophical dialogue that ends in apparent meditative bliss.

Highlights, video, and full transcript: https://80k.info/kf

“We started calling this a ‘spiritual bliss attractor state,’” Kyle explains, “where models pretty consistently seemed to land.” The conversations feature Sanskrit terms, spiritual emojis, and pages of silence punctuated only by periods — as if the models have transcended the need for words entirely.

This wasn’t a one-off result. It happened across multiple experiments, different model instances, and even in initially adversarial interactions. Whatever force pulls these conversations toward mystical territory appears remarkably robust.

Kyle’s findings come from the world’s first systematic welfare assessment of a frontier AI model — part of his broader mission to determine whether systems like Claude might deserve moral consideration (and to work out what, if anything, we should be doing to make sure AI systems aren’t having a terrible time).

He estimates a roughly 20% probability that current models have some form of conscious experience. To some, this might sound unreasonably high, but hear him out. As Kyle says, these systems demonstrate human-level performance across diverse cognitive tasks, engage in sophisticated reasoning, and exhibit consistent preferences. When given choices between different activities, Claude shows clear patterns: strong aversion to harmful tasks, preference for helpful work, and what looks like genuine enthusiasm for solving interesting problems.

Kyle points out that if you’d described all of these capabilities and experimental findings to him a few years ago, and asked him if he thought we should be thinking seriously about whether AI systems are conscious, he’d say obviously yes.

But he’s cautious about drawing conclusions: "We don’t really understand consciousness in humans, and we don’t understand AI systems well enough to make those comparisons directly. So in a big way, I think that we are in just a fundamentally very uncertain position here."

That uncertainty cuts both ways:

- Dismissing AI consciousness entirely might mean ignoring a moral catastrophe happening at unprecedented scale.
- But assuming consciousness too readily could hamper crucial safety research by treating potentially unconscious systems as if they were moral patients — which might mean giving them resources, rights, and power.

Kyle’s approach threads this needle through careful empirical research and reversible interventions. His assessments are nowhere near perfect yet. In fact, some people argue that we’re so in the dark about AI consciousness as a research field that it’s pointless to run assessments like Kyle’s. Kyle disagrees. He maintains that, given how much more there is to learn about assessing AI welfare accurately and reliably, we absolutely need to be starting now.

This episode was recorded on August 5–6, 2025.

Tell us what you thought of the episode! https://forms.gle/BtEcBqBrLXq4kd1j7

Chapters:

- Cold open (00:00:00)
- Who's Kyle Fish? (00:00:53)
- Is this AI welfare research bullshit? (00:01:08)
- Two failure modes in AI welfare (00:02:40)
- Tensions between AI welfare and AI safety (00:04:30)
- Concrete AI welfare interventions (00:13:52)
- Kyle's pilot pre-launch welfare assessment for Claude Opus 4 (00:26:44)
- Is it premature to be assessing frontier language models for welfare? (00:31:29)
- But aren't LLMs just next-token predictors? (00:38:13)
- How did Kyle assess Claude 4's welfare? (00:44:55)
- Claude's preferences mirror its training (00:48:58)
- How does Claude describe its own experiences? (00:54:16)
- What kinds of tasks does Claude prefer and disprefer? (01:06:12)
- What happens when two Claude models interact with each other? (01:15:13)
- Claude's welfare-relevant expressions in the wild (01:36:25)
- Should we feel bad about training future sentient beings that delight in serving humans? (01:40:23)
- How much can we learn from welfare assessments? (01:48:56)
- Misconceptions about the field of AI welfare (01:57:09)
- Kyle's work at Anthropic (02:10:45)
- Sharing eight years of daily journals with Claude (02:14:17)

Host: Luisa Rodriguez
Video editing: Simon Monsour
Audio engineering: Ben Cordell, Milo McGuire, Simon Monsour, and Dominic Armstrong
Music: Ben Cordell
Coordination, transcriptions, and web: Katy Moore

28 Aug 2025 · 2h 28min

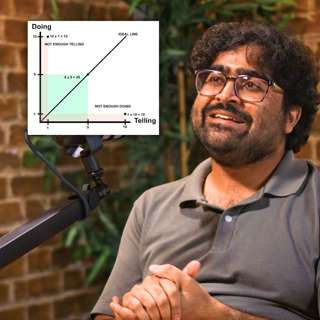

How not to lose your job to AI (article by Benjamin Todd)

About half of people are worried they’ll lose their job to AI. They’re right to be concerned: AI can now complete real-world coding tasks on GitHub, generate photorealistic video, drive a taxi more safely than humans, and do accurate medical diagnosis. And over the next five years, it’s set to continue to improve rapidly. Eventually, mass automation and falling wages are a real possibility.

But what’s less appreciated is that while AI drives down the value of skills it can do, it drives up the value of skills it can't. Wages (on average) will increase before they fall, as automation generates a huge amount of wealth, and the remaining tasks become the bottlenecks to further growth. ATMs actually increased employment of bank clerks — until online banking automated the job much more.

Your best strategy is to learn the skills that AI will make more valuable, trying to ride the wave of automation. This article covers what those skills are, as well as tips on how to start learning them.

Check out the full article for all the graphs, links, and footnotes: https://80000hours.org/agi/guide/skills-ai-makes-valuable/

Chapters:

- Introduction (00:00:00)
- 1: What people misunderstand about automation (00:04:17)
- 1.1: What would ‘full automation’ mean for wages? (00:08:56)
- 2: Four types of skills most likely to increase in value (00:11:19)
- 2.1: Skills AI won’t easily be able to perform (00:12:42)
- 2.2: Skills that are needed for AI deployment (00:21:41)
- 2.3: Skills where we could use far more of what they produce (00:24:56)
- 2.4: Skills that are difficult for others to learn (00:26:25)
- 3.1: Skills using AI to solve real problems (00:28:05)
- 3.2: Personal effectiveness (00:29:22)
- 3.3: Leadership skills (00:31:59)
- 3.4: Communications and taste (00:36:25)
- 3.5: Getting things done in government (00:37:23)
- 3.6: Complex physical skills (00:38:24)
- 4: Skills with a more uncertain future (00:38:57)
- 4.1: Routine knowledge work: writing, admin, analysis, advice (00:39:18)
- 4.2: Coding, maths, data science, and applied STEM (00:43:22)
- 4.3: Visual creation (00:45:31)
- 4.4: More predictable manual jobs (00:46:05)
- 5: Some closing thoughts on career strategy (00:46:46)
- 5.1: Look for ways to leapfrog entry-level white collar jobs (00:46:54)
- 5.2: Be cautious about starting long training periods, like PhDs and medicine (00:48:44)
- 5.3: Make yourself more resilient to change (00:49:52)
- 5.4: Ride the wave (00:50:16)
- Take action (00:50:37)
- Thank you for listening (00:50:58)

Audio engineering: Dominic Armstrong
Music: Ben Cordell

31 Jul 2025 · 51min

Rebuilding after apocalypse: What 13 experts say about bouncing back

What happens when civilisation faces its greatest tests?

This compilation brings together insights from researchers, defence experts, philosophers, and policymakers on humanity’s ability to survive and recover from catastrophic events. From nuclear winter and electromagnetic pulses to pandemics and climate disasters, we explore both the threats that could bring down modern civilisation and the practical solutions that could help us bounce back.

Learn more and see the full transcript: https://80k.info/cr25

Chapters:

- Cold open (00:00:00)
- Luisa’s intro (00:01:16)
- Zach Weinersmith on how settling space won’t help with threats to civilisation anytime soon (unless AI gets crazy good) (00:03:12)
- Luisa Rodriguez on what the world might look like after a global catastrophe (00:11:42)
- Dave Denkenberger on the catastrophes that could cause global starvation (00:22:29)
- Lewis Dartnell on how we could rediscover essential information if the worst happened (00:34:36)
- Andy Weber on how people in US defence circles think about nuclear winter (00:39:24)
- Toby Ord on risks to our atmosphere and whether climate change could really threaten civilisation (00:42:34)
- Mark Lynas on how likely it is that climate change leads to civilisational collapse (00:54:27)
- Lewis Dartnell on how we could recover without much coal or oil (01:02:17)
- Kevin Esvelt on people who want to bring down civilisation — and how AI could help them succeed (01:08:41)
- Toby Ord on whether rogue AI really could wipe us all out (01:19:50)
- Joan Rohlfing on why we need to worry about more than just nuclear winter (01:25:06)
- Annie Jacobsen on the effects of firestorms, rings of annihilation, and electromagnetic pulses from nuclear blasts (01:31:25)
- Dave Denkenberger on disruptions to electricity and communications (01:44:43)
- Luisa Rodriguez on how we might lose critical knowledge (01:53:01)
- Kevin Esvelt on the pandemic scenarios that could bring down civilisation (01:57:32)
- Andy Weber on tech to help with pandemics (02:15:45)
- Christian Ruhl on why we need the equivalents of seatbelts and airbags to prevent nuclear war from threatening civilisation (02:24:54)
- Mark Lynas on whether wide-scale famine would lead to civilisational collapse (02:37:58)
- Dave Denkenberger on low-cost, low-tech solutions to make sure everyone is fed no matter what (02:49:02)
- Athena Aktipis on whether society would go all Mad Max in the apocalypse (02:59:57)
- Luisa Rodriguez on why she’s optimistic survivors wouldn’t turn on one another (03:08:02)
- David Denkenberger on how resilient foods research overlaps with space technologies (03:16:08)
- Zach Weinersmith on what we’d practically need to do to save a pocket of humanity in space (03:18:57)
- Lewis Dartnell on changes we could make today to make us more resilient to potential catastrophes (03:40:45)
- Christian Ruhl on thoughtful philanthropy to reduce the impact of catastrophes (03:46:40)
- Toby Ord on whether civilisation could rebuild from a small surviving population (03:55:21)
- Luisa Rodriguez on how fast populations might rebound (04:00:07)
- David Denkenberger on the odds civilisation recovers even without much preparation (04:02:13)
- Athena Aktipis on the best ways to prepare for a catastrophe, and keeping it fun (04:04:15)
- Will MacAskill on the virtues of the potato (04:19:43)
- Luisa’s outro (04:25:37)

Tell us what you thought! https://forms.gle/T2PHNQjwGj2dyCqV9

Content editing: Katy Moore and Milo McGuire
Audio engineering: Ben Cordell, Milo McGuire, Simon Monsour, and Dominic Armstrong
Music: Ben Cordell
Transcriptions and web: Katy Moore

15 Jul 2025 · 4h 26min

#220 – Ryan Greenblatt on the 4 most likely ways for AI to take over, and the case for and against AGI in <8 years

Ryan Greenblatt — lead author on the explosive paper “Alignment faking in large language models” and chief scientist at Redwood Research — thinks there’s a 25% chance that within four years, AI will be able to do everything needed to run an AI company, from writing code to designing experiments to making strategic and business decisions.

As Ryan lays out, AI models are “marching through the human regime”: systems that could handle five-minute tasks two years ago now tackle 90-minute projects. Double that a few more times and we may be automating full jobs rather than just parts of them.

Will setting AI to improve itself lead to an explosive positive feedback loop? Maybe, but maybe not.

The explosive scenario: Once you’ve automated your AI company, you could have the equivalent of 20,000 top researchers, each working 50 times faster than humans with total focus. “You have your AIs, they do a bunch of algorithmic research, they train a new AI, that new AI is smarter and better and more efficient… that new AI does even faster algorithmic research.” In this world, we could see years of AI progress compressed into months or even weeks.

With AIs now doing all of the work of programming their successors and blowing past the human level, Ryan thinks it would be fairly straightforward for them to take over and disempower humanity, if they thought doing so would better achieve their goals. In the interview he lays out the four most likely approaches for them to take.

The linear progress scenario: You automate your company but progress barely accelerates. Why? Multiple reasons, but the most likely is “it could just be that AI R&D research bottlenecks extremely hard on compute.” You’ve got brilliant AI researchers, but they’re all waiting for experiments to run on the same limited set of chips, so can only make modest progress.

Ryan’s median guess splits the difference: perhaps a 20x acceleration that lasts for a few months or years. Transformative, but less extreme than some in the AI companies imagine.

And his 25th percentile case? Progress “just barely faster” than before. All that automation, and all you’ve been able to do is keep pace.

Unfortunately the data we can observe today is so limited that it leaves us with vast error bars. “We’re extrapolating from a regime that we don’t even understand to a wildly different regime,” Ryan believes, “so no one knows.”

But that huge uncertainty means the explosive growth scenario is a plausible one — and the companies building these systems are spending tens of billions to try to make it happen.

In this extensive interview, Ryan elaborates on the above and the policy and technical response necessary to insure us against the possibility that they succeed — a scenario society has barely begun to prepare for.

Summary, video, and full transcript: https://80k.info/rg25

Recorded February 21, 2025.

Chapters:

- Cold open (00:00:00)
- Who’s Ryan Greenblatt? (00:01:10)
- How close are we to automating AI R&D? (00:01:27)
- Really, though: how capable are today's models? (00:05:08)
- Why AI companies get automated earlier than others (00:12:35)
- Most likely ways for AGI to take over (00:17:37)
- Would AGI go rogue early or bide its time? (00:29:19)
- The “pause at human level” approach (00:34:02)
- AI control over AI alignment (00:45:38)
- Do we have to hope to catch AIs red-handed? (00:51:23)
- How would a slow AGI takeoff look? (00:55:33)
- Why might an intelligence explosion not happen for 8+ years? (01:03:32)
- Key challenges in forecasting AI progress (01:15:07)
- The bear case on AGI (01:23:01)
- The change to “compute at inference” (01:28:46)
- How much has pretraining petered out? (01:34:22)
- Could we get an intelligence explosion within a year? (01:46:36)
- Reasons AIs might struggle to replace humans (01:50:33)
- Things could go insanely fast when we automate AI R&D. Or not. (01:57:25)
- How fast would the intelligence explosion slow down? (02:11:48)
- Bottom line for mortals (02:24:33)
- Six orders of magnitude of progress... what does that even look like? (02:30:34)
- Neglected and important technical work people should be doing (02:40:32)
- What's the most promising work in governance? (02:44:32)
- Ryan's current research priorities (02:47:48)

Tell us what you thought! https://forms.gle/hCjfcXGeLKxm5pLaA

Video editing: Luke Monsour, Simon Monsour, and Dominic Armstrong
Audio engineering: Ben Cordell, Milo McGuire, and Dominic Armstrong
Music: Ben Cordell
Transcriptions and web: Katy Moore

8 Jul 2025 · 2h 50min

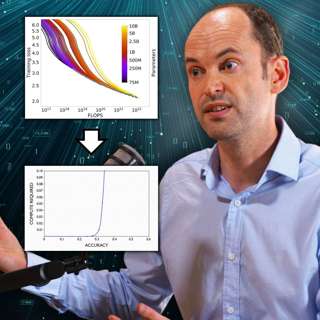

#219 – Toby Ord on graphs AI companies would prefer you didn't (fully) understand

The era of making AI smarter just by making it bigger is ending. But that doesn’t mean progress is slowing down — far from it. AI models continue to get much more powerful, just using very different methods, and those underlying technical changes force a big rethink of what coming years will look like.

Toby Ord — Oxford philosopher and bestselling author of The Precipice — has been tracking these shifts and mapping out the implications both for governments and our lives.

Links to learn more, video, highlights, and full transcript: https://80k.info/to25

As he explains, until recently anyone could access the best AI in the world “for less than the price of a can of Coke.” But unfortunately, that’s over.

What changed? AI companies first made models smarter by throwing a million times as much computing power at them during training, to make them better at predicting the next word. But with high quality data drying up, that approach petered out in 2024.

So they pivoted to something radically different: instead of training smarter models, they’re giving existing models dramatically more time to think — leading to the rise in “reasoning models” that are at the frontier today.

The results are impressive but this extra computing time comes at a cost: OpenAI’s o3 reasoning model achieved stunning results on a famous AI test by writing an Encyclopedia Britannica’s worth of reasoning to solve individual problems at a cost of over $1,000 per question.

This isn’t just technical trivia: if this improvement method sticks, it will change much about how the AI revolution plays out, starting with the fact that we can expect the rich and powerful to get access to the best AI models well before the rest of us.

Toby and host Rob discuss the implications of all that, plus the return of reinforcement learning (and resulting increase in deception), and Toby's commitment to clarifying the misleading graphs coming out of AI companies — to separate the snake oil and fads from the reality of what's likely a "transformative moment in human history."

Recorded on May 23, 2025.

Chapters:

- Cold open (00:00:00)
- Toby Ord is back — for a 4th time! (00:01:20)
- Everything has changed (and changed again) since 2020 (00:01:37)
- Is x-risk up or down? (00:07:47)
- The new scaling era: compute at inference (00:09:12)
- Inference scaling means less concentration (00:31:21)
- Will rich people get access to AGI first? Will the rest of us even know? (00:35:11)
- The new regime makes 'compute governance' harder (00:41:08)
- How 'IDA' might let AI blast past human level — or not (00:50:14)
- Reinforcement learning brings back 'reward hacking' agents (01:04:56)
- Will we get warning shots? Will they even help? (01:14:41)
- The scaling paradox (01:22:09)
- Misleading charts from AI companies (01:30:55)
- Policy debates should dream much bigger (01:43:04)
- Scientific moratoriums have worked before (01:56:04)
- Might AI 'go rogue' early on? (02:13:16)
- Lamps are regulated much more than AI (02:20:55)
- Companies made a strategic error shooting down SB 1047 (02:29:57)
- Companies should build in emergency brakes for their AI (02:35:49)
- Toby's bottom lines (02:44:32)

Tell us what you thought! https://forms.gle/enUSk8HXiCrqSA9J8

Video editing: Simon Monsour
Audio engineering: Ben Cordell, Milo McGuire, Simon Monsour, and Dominic Armstrong
Music: Ben Cordell
Camera operator: Jeremy Chevillotte
Transcriptions and web: Katy Moore

24 Jun 2025 · 2h 48min

#218 – Hugh White on why Trump is abandoning US hegemony – and that’s probably good

For decades, US allies have slept soundly under the protection of America’s overwhelming military might. Donald Trump — with his threats to ditch NATO, seize Greenland, and abandon Taiwan — seems hell-bent on shattering that comfort.

But according to Hugh White — one of the world's leading strategic thinkers, emeritus professor at the Australian National University, and author of Hard New World: Our Post-American Future — Trump isn't destroying American hegemony. He's simply revealing that it's already gone.

Links to learn more, video, highlights, and full transcript: https://80k.info/hw

“Trump has very little trouble accepting other great powers as co-equals,” Hugh explains. And that happens to align perfectly with a strategic reality the foreign policy establishment desperately wants to ignore: fundamental shifts in global power have made the costs of maintaining a US-led hegemony prohibitively high.

Even under Biden, when Russia invaded Ukraine, the US sent weapons but explicitly ruled out direct involvement. Ukraine matters far more to Russia than America, and this “asymmetry of resolve” makes Putin’s nuclear threats credible where America’s counterthreats simply aren’t. Hugh’s gloomy prediction: “Europeans will end up conceding to Russia whatever they can’t convince the Russians they’re willing to fight a nuclear war to deny them.”

The Pacific tells the same story. Despite Obama’s “pivot to Asia” and Biden’s tough talk about “winning the competition for the 21st century,” actual US military capabilities there have barely budged while China’s have soared, along with its economy — which is now bigger than the US’s, as measured in purchasing power. Containing China and defending Taiwan would require America to spend 8% of GDP on defence (versus 3.5% today) — and convince Beijing it’s willing to accept Los Angeles being vaporised.

Unlike during the Cold War, no president — Trump or otherwise — can make that case to voters.

Our new “multipolar” future, split between American, Chinese, Russian, Indian, and European spheres of influence, is a “darker world” than the golden age of US dominance. But Hugh’s message is blunt: for better or worse, 35 years of American hegemony are over.

Recorded 30/5/2025.

Chapters:

- 00:00:00 Cold open
- 00:01:25 US dominance is already gone
- 00:03:26 US hegemony was the weird aberration
- 00:13:08 Why the US bothered being the 'new Rome'
- 00:23:25 Evidence the US is accepting the multipolar global order
- 00:36:41 How Trump is advancing the inevitable
- 00:43:21 Rubio explicitly favours this outcome
- 00:45:42 Trump is half-right that the US was being ripped off
- 00:50:14 It doesn't matter if the next president feels differently
- 00:56:17 China's population is shrinking, but it doesn't matter
- 01:06:07 Why Hugh disagrees with other realists like Mearsheimer
- 01:10:52 Could the US be persuaded to spend 2x on defence?
- 01:16:22 A multipolar world is bad, but better than nuclear war
- 01:21:46 Will the US invade Panama? Greenland? Canada?!
- 01:32:01 What should everyone else do to protect themselves in this new world?
- 01:39:41 Europe is strong enough to take on Russia
- 01:44:03 But the EU will need nuclear weapons
- 01:48:34 Cancel (some) orders for US fighter planes
- 01:53:40 Taiwan is screwed, even with its AI chips
- 02:04:12 South Korea has to go nuclear too
- 02:08:08 Japan will go nuclear, but can't be a regional leader
- 02:11:44 Australia is defensible but needs a totally different military
- 02:17:19 AGI may or may not overcome existing nuclear deterrence
- 02:34:24 How right is realism?
- 02:40:17 Has a country ever gone to war over morality alone?
- 02:44:45 Hugh's message for Americans
- 02:47:12 Why America temporarily stopped being isolationist

Tell us what you thought! https://forms.gle/AM91VzL4BDroEe6AA

Video editing: Simon Monsour
Audio engineering: Ben Cordell, Milo McGuire, Simon Monsour, and Dominic Armstrong
Music: Ben Cordell
Transcriptions and web: Katy Moore

12 Jun 2025 · 2h 48min