#73 – Phil Trammell on patient philanthropy and waiting to do good

To do good, most of us look to use our time and money to affect the world around us today. But perhaps that's all wrong. If you took $1,000 you were going to donate and instead put it in the stock mar...

17 March 2020 · 2h 35min

#72 - Toby Ord on the precipice and humanity's potential futures

This week Oxford academic and 80,000 Hours trustee Dr Toby Ord released his new book The Precipice: Existential Risk and the Future of Humanity. It's about how our long-term future could be better tha...

7 March 2020 · 3h 14min

#71 - Benjamin Todd on the key ideas of 80,000 Hours

The 80,000 Hours Podcast is about “the world’s most pressing problems and how you can use your career to solve them”, and in this episode we tackle that question in the most direct way possible. Las...

2 March 2020 · 2h 57min

Arden & Rob on demandingness, work-life balance & injustice (80k team chat #1)

Today's bonus episode of the podcast is a quick conversation between me and my fellow 80,000 Hours researcher Arden Koehler about a few topics, including the demandingness of morality, work-life balan...

25 February 2020 · 44min

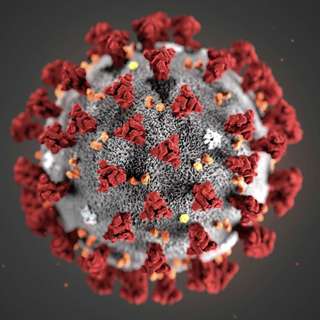

#70 - Dr Cassidy Nelson on the 12 best ways to stop the next pandemic (and limit nCoV)

nCoV is alarming governments and citizens around the world. It has killed more than 1,000 people, brought the Chinese economy to a standstill, and continues to show up in more and more places. But bad...

13 February 2020 · 2h 26min

#69 – Jeffrey Ding on China, its AI dream, and what we get wrong about both

The State Council of China's 2017 AI plan was the starting point of China’s AI planning; China’s approach to AI is defined by its top-down and monolithic nature; China is winning the AI arms race; and...

6 February 2020 · 1h 37min

Rob & Howie on what we do and don't know about 2019-nCoV

Two 80,000 Hours researchers, Robert Wiblin and Howie Lempel, record an experimental bonus episode about the new 2019-nCoV virus. See this list of resources, including many discussed in the episode, to...

3 February 2020 · 1h 18min

#68 - Will MacAskill on the paralysis argument, whether we're at the hinge of history, & his new priorities

You’re given a box with a set of dice in it. If you roll an even number, a person's life is saved. If you roll an odd number, someone else will die. Each time you shake the box you get $10. Should you...

24 January 2020 · 3h 25min