#204 – Nate Silver on making sense of SBF, and his biggest critiques of effective altruism

Rob Wiblin speaks with FiveThirtyEight election forecaster and author Nate Silver about his new book: On the Edge: The Art of Risking Everything. Links to learn more, highlights, video, and full transc...

16 Oct 2024 · 1h 57min

#203 – Peter Godfrey-Smith on interfering with wild nature, accepting death, and the origin of complex civilisation

"In the human case, it would be mistaken to give a kind of hour-by-hour accounting. You know, 'I had +4 level of experience for this hour, then I had -2 for the next hour, and then I had -1' — and you...

3 Oct 2024 · 1h 25min

Luisa and Keiran on free will, and the consequences of never feeling enduring guilt or shame

In this episode from our second show, 80k After Hours, Luisa Rodriguez and Keiran Harris chat about the consequences of letting go of enduring guilt, shame, anger, and pride. Links to learn more, highl...

27 Sep 2024 · 1h 36min

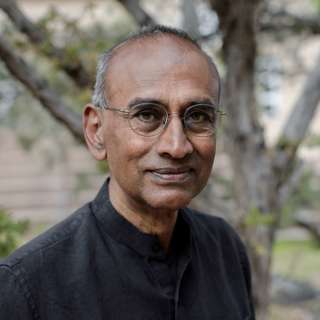

#202 – Venki Ramakrishnan on the cutting edge of anti-ageing science

"For every far-out idea that turns out to be true, there were probably hundreds that were simply crackpot ideas. In general, [science] advances building on the knowledge we have, and seeing what the n...

19 Sep 2024 · 2h 20min

#201 – Ken Goldberg on why your robot butler isn’t here yet

"Perception is quite difficult with cameras: even if you have a stereo camera, you still can’t really build a map of where everything is in space. It’s just very difficult. And I know that sounds surp...

13 Sep 2024 · 2h 1min

#200 – Ezra Karger on what superforecasters and experts think about existential risks

"It’s very hard to find examples where people say, 'I’m starting from this point. I’m starting from this belief.' So we wanted to make that very legible to people. We wanted to say, 'Experts think thi...

4 Sep 2024 · 2h 49min

#199 – Nathan Calvin on California’s AI bill SB 1047 and its potential to shape US AI policy

"I do think that there is a really significant sentiment among parts of the opposition that it’s not really just that this bill itself is that bad or extreme — when you really drill into it, it feels ...

29 Aug 2024 · 1h 12min

#198 – Meghan Barrett on upending everything you thought you knew about bugs in 3 hours

"This is a group of animals I think people are particularly unfamiliar with. They are especially poorly covered in our science curriculum; they are especially poorly understood, because people don’t s...

26 Aug 2024 · 3h 48min