![#73 - Phil Trammell on patient philanthropy and waiting to do good [re-release]](https://cdn.podme.com/podcast-images/BC5303E5E91AE22CC381B9A2DC45C8DE_small.jpg)

#73 - Phil Trammell on patient philanthropy and waiting to do good [re-release]

Rebroadcast: this episode was originally released in March 2020. To do good, most of us look to use our time and money to affect the world around us today. But perhaps that's all wrong. If you too...

7 Jan 2021, 2h 41min

![#75 – Michelle Hutchinson on what people most often ask 80,000 Hours [re-release]](https://cdn.podme.com/podcast-images/E70311DF12F9F2966C1C491FB2BEBF89_small.jpg)

#75 – Michelle Hutchinson on what people most often ask 80,000 Hours [re-release]

Rebroadcast: this episode was originally released in April 2020. Since it was founded, 80,000 Hours has done one-on-one calls to supplement our online content and offer more personalised advice. We ...

30 Dec 2020, 2h 14min

#89 – Owen Cotton-Barratt on epistemic systems and layers of defense against potential global catastrophes

From one point of view academia forms one big 'epistemic' system — a process which directs attention, generates ideas, and judges which are good. Traditional print media is another such system, and we...

17 Dec 2020, 2h 38min

#88 – Tristan Harris on the need to change the incentives of social media companies

In its first 28 days on Netflix, the documentary The Social Dilemma — about the possible harms being caused by social media and other technology products — was seen by 38 million households in about 1...

3 Dec 2020, 2h 35min

Benjamin Todd on what the effective altruism community most needs (80k team chat #4)

In the last '80k team chat' with Ben Todd and Arden Koehler, we discussed what effective altruism is and isn't, and how to argue for it. In this episode we turn now to what the effective altruism comm...

12 Nov 2020, 1h 25min

#87 – Russ Roberts on whether it's more effective to help strangers, or people you know

If you want to make the world a better place, would it be better to help your niece with her SATs, or try to join the State Department to lower the risk that the US and China go to war? People involve...

3 Nov 2020, 1h 49min

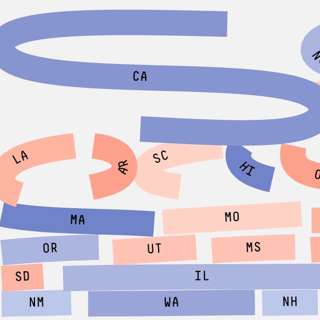

How much does a vote matter? (Article)

Today’s release is the latest in our series of audio versions of our articles. In this one, "How much does a vote matter?", I investigate the two key things that determine the impact of your vote: • ...

29 Oct 2020, 31min

#86 – Hilary Greaves on Pascal's mugging, strong longtermism, and whether existing can be good for us

Had World War 1 never happened, you might never have existed. It’s very unlikely that the exact chain of events that led to your conception would have happened otherwise — so perhaps you wouldn't have...

21 Oct 2020, 2h 24min