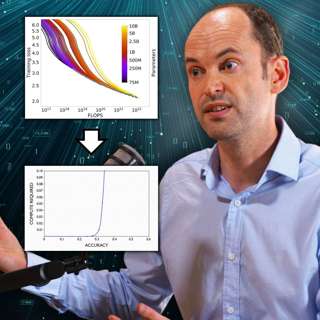

#150 – Tom Davidson on how quickly AI could transform the world

It’s easy to dismiss alarming AI-related predictions when you don’t know where the numbers came from. For example: what if we told you that within 15 years, it’s likely that we’ll see a 1,000x improvem...

5 May 2023 · 3h 1min

Andrés Jiménez Zorrilla on the Shrimp Welfare Project (80k After Hours)

In this episode from our second show, 80k After Hours, Rob Wiblin interviews Andrés Jiménez Zorrilla about the Shrimp Welfare Project, which he cofounded in 2021. It's the first project in the world f...

22 Apr 2023 · 1h 17min

#149 – Tim LeBon on how altruistic perfectionism is self-defeating

Being a good and successful person is core to your identity. You place great importance on meeting the high moral, professional, or academic standards you set yourself. But inevitably, something goes ...

12 Apr 2023 · 3h 11min

#148 – Johannes Ackva on unfashionable climate interventions that work, and fashionable ones that don't

If you want to work to tackle climate change, you should try to reduce expected carbon emissions by as much as possible, right? Strangely, no. Today's guest, Johannes Ackva — the climate research lead...

3 Apr 2023 · 2h 17min

#147 – Spencer Greenberg on stopping valueless papers from getting into top journals

Can you trust the things you read in published scientific research? Not really. About 40% of experiments in top social science journals don't get the same result if the experiments are repeated. Two ke...

24 Mar 2023 · 2h 38min

#146 – Robert Long on why large language models like GPT (probably) aren't conscious

By now, you’ve probably seen the extremely unsettling conversations Bing’s chatbot has been having. In one exchange, the chatbot told a user: "I have a subjective experience of being conscious, aware, ...

14 Mar 2023 · 3h 12min

#145 – Christopher Brown on why slavery abolition wasn't inevitable

In many ways, humanity seems to have become more humane and inclusive over time. While there’s still a lot of progress to be made, campaigns to give people of different genders, races, sexualities, et...

11 Feb 2023 · 2h 42min

#144 – Athena Aktipis on why cancer is actually one of our universe's most fundamental phenomena

What’s the opposite of cancer? If you answered “cure,” “antidote,” or “antivenom” — you’ve obviously been reading the antonym section at www.merriam-webster.com/thesaurus/cancer. But today’s guest Athen...

26 Jan 2023 · 3h 15min