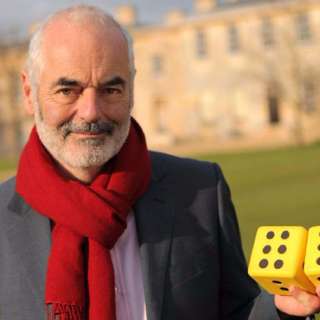

#2 - David Spiegelhalter on risk, stats and improving understanding of science

Recorded in 2015 by Robert Wiblin with colleague Jess Whittlestone at the Centre for Effective Altruism, and recovered from the dusty 80,000 Hours archives. David Spiegelhalter is a statistician at the University of Cambridge and something of an academic celebrity in the UK. Part of his role is to improve the public understanding of risk, especially the everyday risks we face, like getting cancer or dying in a car crash. As a result, he's regularly in the media explaining numbers in the news, trying to help both ordinary people and politicians focus on the important risks we face and avoid being distracted by flashy risks that don't actually have much impact. To help make sense of the uncertainties we face in life, he has had to invent concepts like the microlife, a 30-minute change in life expectancy (https://en.wikipedia.org/wiki/Microlife). We wanted to learn whether he thought a lifetime of work communicating science had actually had much impact on the world, and what advice he might have for people planning their careers today. Summary, full transcript and extra links to learn more.

21 June 2017, 33 min

#1 - Miles Brundage on the world's desperate need for AI strategists and policy experts

Robert Wiblin, Director of Research at 80,000 Hours, speaks with Miles Brundage, research fellow at the University of Oxford's Future of Humanity Institute. Miles studies the social implications of developing new technologies and has a particular interest in artificial general intelligence, that is, an AI system that could do most or all of the tasks humans can do. This interview complements our profile of the importance of positively shaping artificial intelligence and our guide to careers in AI policy and strategy. Full transcript, apply for personalised coaching to work on AI strategy, see when each question is asked, and read extra resources to learn more.

5 June 2017, 55 min

#0 – Introducing the 80,000 Hours Podcast

80,000 Hours is a non-profit that provides research and other support to help people switch into careers that effectively tackle the world's most pressing problems. This podcast is just one of the things we offer; you can find the rest at 80000hours.org. Since 2017 this show has been putting out interviews about the world's most pressing problems and how to solve them. Some people enjoy it because they love to learn about important things, and others use it to figure out what they want to do with their careers or with their charitable giving. If you haven't yet spent much time with 80,000 Hours or our general style of thinking, called effective altruism, it's probably really helpful to first go through the episodes that set the scene, explain our overall perspective on things, and generally offer all the background information you need to get the most out of the episodes we're making now. That's why we've made a new feed with ten carefully selected episodes from the show's archives, called 'Effective Altruism: An Introduction'. You can find it by searching for 'Effective Altruism' in your podcasting app or at 80000hours.org/intro.
Or, if you'd rather listen on this feed, here are the ten episodes we recommend you listen to first:
• #21 – Holden Karnofsky on the world's most intellectual foundation and how philanthropy can have maximum impact by taking big risks
• #6 – Toby Ord on why the long-term future of humanity matters more than anything else and what we should do about it
• #17 – Will MacAskill on why our descendants might view us as moral monsters
• #39 – Spencer Greenberg on the scientific approach to updating your beliefs when you get new evidence
• #44 – Paul Christiano on developing real solutions to the 'AI alignment problem'
• #60 – What Professor Tetlock learned from 40 years studying how to predict the future
• #46 – Hilary Greaves on moral cluelessness, population ethics and tackling global issues in academia
• #71 – Benjamin Todd on the key ideas of 80,000 Hours
• #50 – Dave Denkenberger on how we might feed all 8 billion people through a nuclear winter
• 80,000 Hours Team chat #3 – Koehler and Todd on the core idea of effective altruism and how to argue for it

1 May 2017, 3 min